How llms.txt and MCP Are Changing API Discovery for Product Teams

AI agents are becoming a major new consumer of APIs, but most API documentation is invisible to them. This article breaks down how llms.txt and MCP are creating a new discovery layer for APIs, what this means for product teams, and how to make your APIs agent-readable without overhauling your existing workflow.

There is a quiet shift happening in how APIs get discovered and adopted. For the past decade, API discovery has been a human activity. A developer searches Google, lands on your documentation, reads through your getting started guide, and eventually makes their first API call. That model still works, but it is no longer the only one that matters.

AI agents are becoming a meaningful new consumer of APIs. Not in the distant future. Right now. Coding assistants like Cursor, Windsurf, and Claude Code are generating API integration code on behalf of developers. Autonomous agents are evaluating which APIs to call based on task requirements. AI-powered development workflows are making integration decisions without a human ever visiting your docs site.

The problem: most API documentation is invisible to these agents.

The Visibility Gap

Traditional API documentation is designed for humans. It uses rich HTML layouts, interactive explorers, sidebar navigation, and visual hierarchy to help developers understand and use your API. All of that is noise to an AI agent.

When an agent needs to integrate with an API, it looks for machine-readable descriptions of what the API does, what endpoints are available, what parameters they accept, and how authentication works. If that information only exists in a format designed for human consumption (HTML pages, PDFs, Postman collections without machine-readable metadata), the agent can't parse it reliably.

The result: your API gets skipped. The agent picks a competitor whose documentation is machine-readable, or it hallucinates an integration that doesn't work. Either way, you lose.

Two emerging standards are filling this gap: llms.txt and MCP (Model Context Protocol).

What Is llms.txt?

llms.txt is the simplest possible solution to the agent discovery problem. It is a Markdown-formatted text file served from the root of your documentation site (e.g., yourdomain.com/llms.txt) that provides a structured summary of your API in a form compact enough for an LLM's context window.

Think of it as a counterpart to robots.txt for AI. Where robots.txt tells search engine crawlers which paths they may and may not crawl, llms.txt tells AI agents what your API does and how to use it.

A typical llms.txt file includes:

- A plain-language description of what your API does

- Available endpoints and their purposes

- Authentication requirements

- Links to detailed documentation for each resource

- Getting started instructions optimized for LLM consumption

The format is intentionally simple. No JSON schemas, no complex markup. Just lightweight Markdown (a title, a short summary, and sections of annotated links) that fits within an LLM's context window and gives the agent enough information to decide whether your API is relevant and how to start using it.
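To make the shape concrete, here is a minimal Python sketch that renders an llms.txt body from a small data structure. It follows the layout used by the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections of annotated links). The API name, URLs, and the `ApiSummary`, `Link`, and `render_llms_txt` names are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    title: str
    url: str
    note: str = ""


@dataclass
class ApiSummary:
    name: str
    summary: str
    sections: dict[str, list[Link]] = field(default_factory=dict)


def render_llms_txt(api: ApiSummary) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {api.name}", "", f"> {api.summary}", ""]
    for heading, links in api.sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical API, used only to show the output shape.
example = ApiSummary(
    name="Example Payments API",
    summary="REST API for creating and refunding payments. Auth: bearer token.",
    sections={
        "Docs": [
            Link("Getting started", "https://docs.example.com/start.md", "first call in five minutes"),
            Link("Authentication", "https://docs.example.com/auth.md"),
        ],
        "Endpoints": [
            Link("POST /payments", "https://docs.example.com/payments.md", "create a payment"),
        ],
    },
)

llms_txt = render_llms_txt(example)
print(llms_txt)
```

Generating the file from structured data, rather than writing it by hand, is one way to keep it short and consistent as the API grows.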

Why llms.txt matters for product teams

llms.txt is a passive discovery mechanism. You publish it at a well-known path, and agents that look for it can find it when they visit your domain. There is no integration required on the agent side. Any LLM-based tool that has been trained or prompted to look for llms.txt files can pick yours up without further work from you.

This makes it the lowest-effort, highest-leverage change you can make to your API's agent discoverability. It takes minutes to create and requires no changes to your existing documentation infrastructure.

What Is MCP (Model Context Protocol)?

If llms.txt is passive discovery, MCP is active integration.

MCP (Model Context Protocol), originally developed by Anthropic, is a standardized protocol that lets AI agents discover and invoke API tools programmatically. Instead of just reading about your API, an agent using MCP can query a server to find out what tools (endpoints) are available, what parameters they accept, and then call them directly.

The key components of MCP:

- Tool discovery: Agents can query an MCP server to get a list of available tools with descriptions and parameter schemas

- Standardized invocation: Once discovered, tools can be called through a consistent protocol regardless of the underlying API's design

- Context management: Beyond tools, an MCP server can expose resources (data the agent can read) and prompts (reusable templates), so the agent works from context you supply rather than guessing, which helps reduce hallucination
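The discovery and invocation steps above can be sketched as a toy request handler. MCP messages are JSON-RPC 2.0, and the `tools/list` and `tools/call` method names come from the protocol. Everything else here is an assumption for illustration: the `get_invoice` tool, its canned reply, and the in-memory `handle` function. A real server would use an MCP SDK and would also implement the initialization handshake and a transport, both of which this sketch leaves out.

```python
import json

# Hypothetical tool registry. Each tool has a description and a JSON Schema for its input.
TOOLS = {
    "get_invoice": {
        "description": "Fetch an invoice by its ID.",
        "inputSchema": {
            "type": "object",
            "properties": {"invoice_id": {"type": "string"}},
            "required": ["invoice_id"],
        },
        # Stand-in for a call to the underlying API.
        "handler": lambda args: f"Invoice {args['invoice_id']}: 42.00 USD",
    }
}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC 2.0 request for tools/list or tools/call."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {
            "tools": [
                {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
                for name, t in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return {"jsonrpc": "2.0", "id": request["id"],
                    "error": {"code": -32602, "message": "Unknown tool"}}
        text = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Step 1: the agent asks what tools exist.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})

# Step 2: the agent calls one of them with arguments matching its schema.
call = handle({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_invoice", "arguments": {"invoice_id": "inv_123"}},
})
print(json.dumps(call, indent=2))
```

The agent never needs to know the shape of the underlying REST endpoint. It only sees the tool name, the description, and the input schema.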

How MCP differs from traditional API integration

Traditional API integration requires a developer to read documentation, understand the API's design, write integration code, handle authentication, and manage errors. MCP abstracts much of this away. The agent discovers what's available, understands the parameters from structured schemas, and invokes tools through a standard interface.

This doesn't eliminate the need for human-readable documentation. Developers still need guides, tutorials, and contextual explanations. But it creates a parallel discovery and integration path for agents that operates at machine speed.

llms.txt vs MCP: Different Tools for Different Problems

These two standards are complementary, not competing.

llms.txt answers the question: "What does this API do and is it relevant to my task?" It is a discovery and evaluation layer. An agent reads your llms.txt file, determines whether your API fits the current task, and then decides whether to proceed with integration.

MCP answers the question: "How do I actually use this API right now?" It is an integration layer. Once an agent has decided your API is relevant, MCP provides the mechanism for the agent to discover specific tools and invoke them.

In practice, the most effective setup is publishing both. llms.txt gets your API discovered. MCP gets your API used.

What This Means for Product Teams

If you manage an API product, this shift has practical implications for your documentation and developer experience strategy.

Your API has two audiences now

Human developers and AI agents have fundamentally different discovery and consumption patterns. Humans browse, scan, and read contextually. Agents parse structured data and make binary decisions about relevance. Your documentation strategy needs to serve both.

This does not mean duplicating your documentation. It means publishing your existing documentation in an additional format that agents can consume. The underlying information is the same. The format is different.

Agent-readability is becoming a competitive advantage

As more development workflows incorporate AI assistants, the APIs that agents can discover and integrate with will get chosen more often. This is not hypothetical. We are already seeing this pattern across our platform, where APIs with machine-readable documentation receive significantly more agent-driven integration requests than those without.

The dynamic is similar to what happened with SEO in the early 2000s. The companies that made their content indexable by search engines gained a distribution advantage. The ones that didn't became invisible. Agent-readability is the new SEO for APIs.

You don't need to change your existing workflow

One of the biggest misconceptions about llms.txt and MCP is that adopting them requires a documentation overhaul. It doesn't.

If you already have an OpenAPI specification or structured API reference documentation, the information needed for both llms.txt and MCP already exists in your system. It just needs to be published in an additional format.
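As a rough illustration of why an existing spec is enough, the sketch below maps OpenAPI operations to tool-style descriptors (a name, a description, and a JSON Schema for inputs). The spec fragment and the `openapi_to_tools` function are hypothetical, and the mapping is deliberately simplified: it ignores request bodies, `$ref` resolution, and authentication, all of which a production converter would need to handle.

```python
# Tiny hypothetical OpenAPI fragment; a real spec would be loaded from JSON or YAML.
SPEC = {
    "paths": {
        "/invoices/{invoice_id}": {
            "get": {
                "operationId": "getInvoice",
                "summary": "Fetch an invoice by its ID.",
                "parameters": [
                    {"name": "invoice_id", "in": "path", "required": True,
                     "schema": {"type": "string"}},
                ],
            }
        },
        "/invoices": {
            "get": {
                "operationId": "listInvoices",
                "summary": "List invoices.",
                "parameters": [
                    {"name": "limit", "in": "query", "schema": {"type": "integer"}},
                ],
            }
        },
    }
}

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options"}


def openapi_to_tools(spec: dict) -> list[dict]:
    """Map each OpenAPI operation to a tool-style descriptor with a JSON Schema input."""
    tools = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters" or "summary"
            params = op.get("parameters", [])
            tools.append({
                "name": op.get("operationId", f"{method}_{path}"),
                "description": op.get("summary", ""),
                "inputSchema": {
                    "type": "object",
                    "properties": {p["name"]: p.get("schema", {}) for p in params},
                    "required": [p["name"] for p in params if p.get("required")],
                },
            })
    return tools


tools = openapi_to_tools(SPEC)
for tool in tools:
    print(tool["name"], "->", tool["inputSchema"]["required"])
```

The same operation summaries could equally feed the endpoint list in an llms.txt file, which is the sense in which the information already exists in your system.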

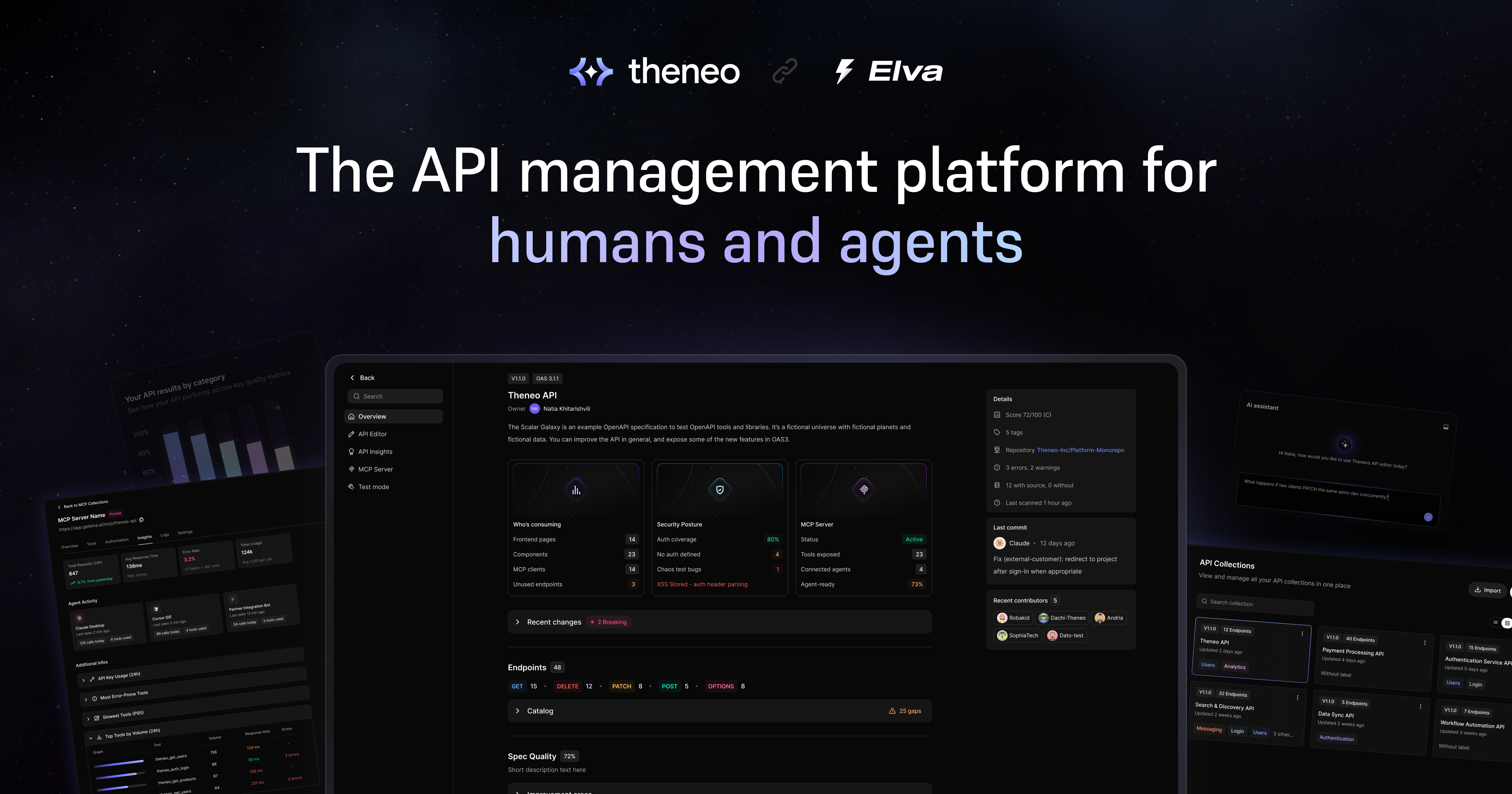

Platforms like Theneo handle this automatically. You import your existing API spec (from Postman, Swagger, or any OpenAPI source), and the platform generates llms.txt and MCP endpoints alongside your human-readable documentation. Your engineering team doesn't need to build or maintain anything new. The agent-readable layer stays in sync with your documentation as your API evolves.

How to Get Started

If you want to make your API agent-discoverable, here is a practical starting point:

1. Publish an llms.txt file

Create a Markdown-formatted text file that describes your API's purpose, lists your main endpoints, and includes links to detailed documentation. Host it at your documentation root. This takes less than an hour and immediately makes your API visible to any agent that checks for llms.txt.

2. Evaluate MCP support

If your API is used in contexts where agents might invoke it directly (developer tools, automation platforms, AI-assisted workflows), consider publishing an MCP server. This requires more effort than llms.txt but enables active integration rather than just passive discovery.

3. Monitor agent traffic

Start tracking requests to your llms.txt file and any MCP endpoints. Look at user-agent strings to distinguish agent traffic from human traffic. This data will help you understand how agents are discovering and using your API, and where to invest further.
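A first pass at this can be a few lines of log analysis. The sketch below assumes your access logs have already been parsed into (path, user agent) pairs. The marker substrings are illustrative and should be checked against each vendor's published crawler documentation, the `/mcp` path is a hypothetical endpoint location, and the sample user-agent strings are made up.

```python
from collections import Counter
from typing import Optional

# Illustrative substrings only; confirm current values against each vendor's crawler docs.
AGENT_MARKERS = ("GPTBot", "ClaudeBot", "PerplexityBot")
AGENT_PATHS = ("/llms.txt", "/mcp")  # "/mcp" is a hypothetical MCP endpoint path


def classify(path: str, user_agent: str) -> Optional[str]:
    """Return 'agent' or 'other' for agent-facing paths, None for everything else."""
    if not path.startswith(AGENT_PATHS):
        return None
    ua = user_agent.lower()
    return "agent" if any(marker.lower() in ua for marker in AGENT_MARKERS) else "other"


def summarize(requests: list[tuple[str, str]]) -> Counter:
    """Count (path, label) pairs across already-parsed (path, user_agent) records."""
    counts: Counter = Counter()
    for path, user_agent in requests:
        label = classify(path, user_agent)
        if label is not None:
            counts[(path, label)] += 1
    return counts


# Made-up sample records standing in for parsed access-log lines.
sample = [
    ("/llms.txt", "Mozilla/5.0 (compatible; GPTBot/1.0)"),
    ("/llms.txt", "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) Safari/605.1.15"),
    ("/mcp", "ClaudeBot/1.0"),
    ("/docs/start", "Mozilla/5.0 (compatible; GPTBot/1.0)"),
]
report = summarize(sample)
for (path, label), count in sorted(report.items()):
    print(path, label, count)
```

User-agent strings can be spoofed or omitted, so treat these counts as a rough signal rather than an exact measure.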

4. Keep both formats in sync

The worst outcome is publishing llms.txt or MCP endpoints that describe a version of your API that no longer exists. Whatever approach you use, make sure the agent-readable layer updates automatically when your API changes. Manual processes will drift. Automated sync is far less likely to.
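If full automation is not in place yet, a drift check in CI is a reasonable stopgap. The sketch below compares the operations in an OpenAPI spec with the endpoints mentioned in an llms.txt file. It assumes endpoints appear in the file as "METHOD /path" (for example inside link titles), which is a convention chosen for this example rather than something the format requires. The spec, the file contents, and the `find_drift` function are hypothetical.

```python
import re

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options"}
# Matches mentions such as "GET /invoices/{invoice_id}" anywhere in the text.
ENDPOINT_RE = re.compile(r"\b(GET|PUT|POST|DELETE|PATCH|HEAD|OPTIONS)\s+(/[^\s)\]`,:]*)")


def spec_endpoints(spec: dict) -> set[str]:
    """Collect 'METHOD /path' strings for every operation in the spec."""
    return {
        f"{method.upper()} {path}"
        for path, item in spec.get("paths", {}).items()
        for method in item
        if method in HTTP_METHODS
    }


def documented_endpoints(llms_txt: str) -> set[str]:
    """Collect 'METHOD /path' mentions from the llms.txt text."""
    return {f"{method} {path.rstrip('.;')}" for method, path in ENDPOINT_RE.findall(llms_txt)}


def find_drift(spec: dict, llms_txt: str) -> dict[str, list[str]]:
    """Report endpoints present on one side but not the other."""
    in_spec, in_docs = spec_endpoints(spec), documented_endpoints(llms_txt)
    return {
        "missing_from_llms_txt": sorted(in_spec - in_docs),
        "stale_in_llms_txt": sorted(in_docs - in_spec),
    }


# Hypothetical inputs: the spec gained POST /invoices and dropped the DELETE operation.
SPEC = {
    "paths": {
        "/invoices": {"get": {}, "post": {}},
        "/invoices/{invoice_id}": {"get": {}},
    }
}
LLMS_TXT = """# Example Billing API

## Endpoints

- [GET /invoices](https://docs.example.com/list.md): list invoices
- [GET /invoices/{invoice_id}](https://docs.example.com/get.md): fetch one invoice
- [DELETE /invoices/{invoice_id}](https://docs.example.com/delete.md): delete an invoice
"""

drift = find_drift(SPEC, LLMS_TXT)
print(drift)
```

Failing the build whenever either list is non-empty turns silent drift into a visible error before it reaches agents.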

The Bigger Picture

The shift to agentic API discovery is not a trend that will reverse. The number of AI agents making API integration decisions is growing rapidly, and the tooling ecosystem around llms.txt and MCP is maturing quickly.

Product teams that make their APIs agent-readable now are positioning themselves for a world where a significant percentage of API discovery and integration happens without a human developer ever visiting a documentation site. The ones that wait will find themselves invisible to an increasingly important channel.

The good news: the barrier to entry is low. Publishing an llms.txt file takes minutes. Adding MCP support takes days, not months. And if you are using a documentation platform that supports both formats natively, the effort is close to zero.

The question isn't whether agents will discover and integrate APIs on behalf of developers. They already are. The question is whether your API will be one of the ones they find.
