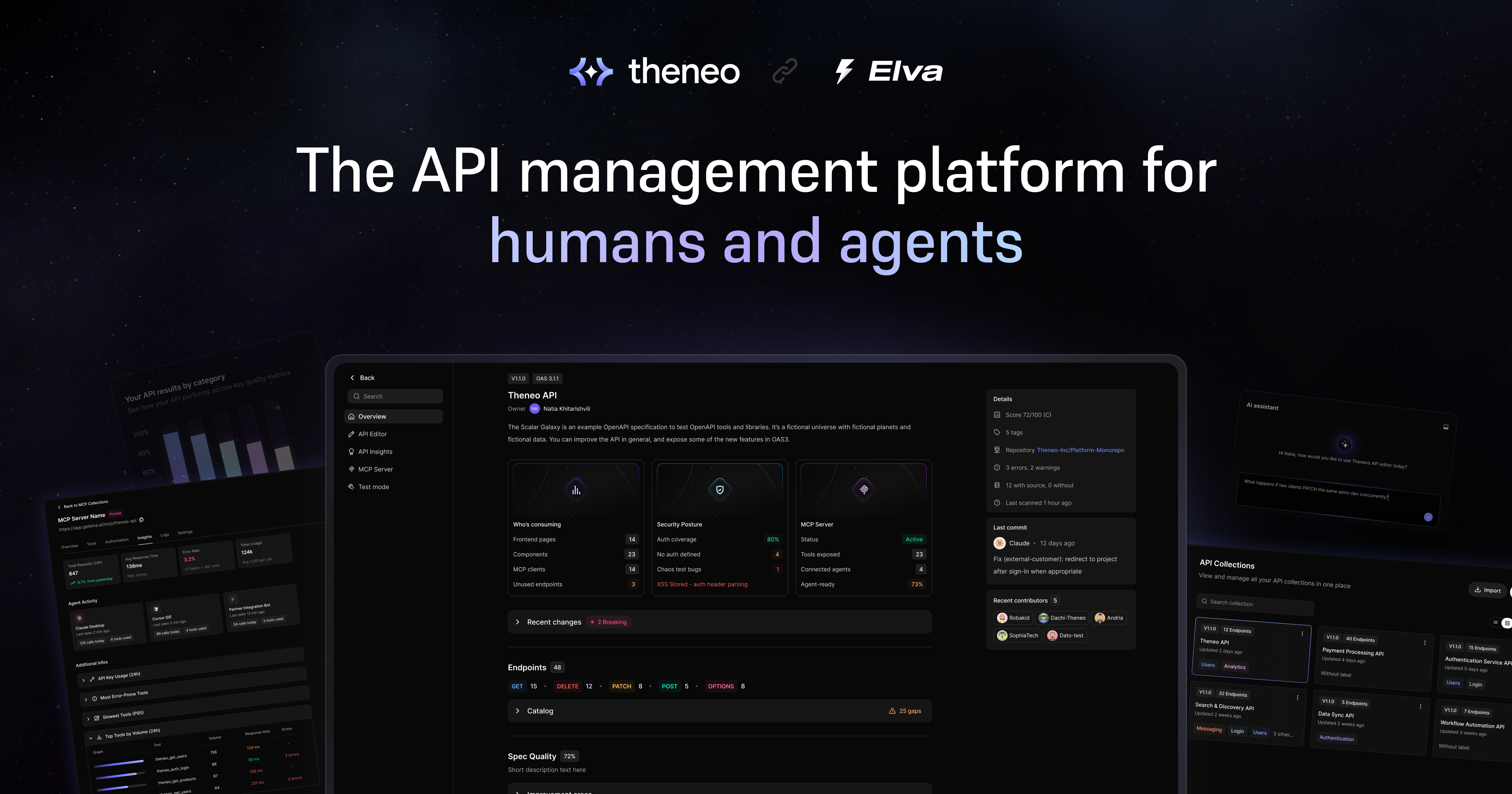

Meet Elva: The API Management Platform That Makes Your APIs Agent-Ready

Elva is Theneo's API management platform that helps teams catalog, manage, and expose their APIs to AI agents with production-grade MCP servers.

Something's shifting in how APIs get consumed, and if you manage APIs, you've probably felt it already.

We've spent years building an API documentation platform that more than 18,000 companies use, from early-stage startups to Fortune 500 enterprises. But over the past year, our customers started asking a different kind of question. It wasn't "how do I make my docs better?" anymore. It was "how do I make my APIs ready for AI agents?"

By some estimates, AI agents could generate more API traffic than human developers within the next couple of years. And honestly? Most APIs aren't built for that. Specs are incomplete. Catalogs are scattered. MCP servers exist as weekend demos, not production infrastructure. Most teams don't know — endpoint by endpoint — which parts of their API surface will hold up when agents start calling at scale.

That's why we built Elva.

What Does It Take to Make an API Agent-Ready?

Short answer: more than good documentation.

You need a clean spec. You need organized collections. You need to know which endpoints are solid and which ones will break the moment an autonomous system tries to call them. And if you want agents to actually use your APIs, you need production-grade MCP servers — not the kind you hacked together in a demo, but ones with real authentication, observability, and logging.

That's what Elva is built to do. Here's how it works.

Connect Your APIs From Where They Already Live

Point Elva at a Git repository and it discovers your OpenAPI specs automatically. As your team merges changes, Elva stays in sync — no manual imports, no copy-pasting YAML files around. If Postman is your source of truth, connect your workspace and Elva pulls your collections directly.
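To make "discovers your OpenAPI specs" a bit more concrete, here's a minimal sketch of what spec discovery over a checked-out repository could look like. It is not Elva's implementation; the function name `find_openapi_specs` is hypothetical, and it only looks at JSON files (YAML specs would need a parser outside the standard library).

```python
import json
from pathlib import Path


def find_openapi_specs(repo_root: str) -> list[Path]:
    """Return JSON files under repo_root that look like OpenAPI/Swagger specs."""
    found = []
    for path in sorted(Path(repo_root).rglob("*.json")):
        try:
            doc = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            continue  # unreadable or not valid JSON: skip it
        # OpenAPI 3.x documents carry a top-level "openapi" version field;
        # Swagger 2.0 documents carry "swagger" instead.
        if isinstance(doc, dict) and ("openapi" in doc or "swagger" in doc):
            found.append(path)
    return found
```

Running something like this on every merge is one simple way a catalog could stay in step with the repository.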

You can also import a single spec file or generate one from scratch if you're starting fresh. But for day-to-day use, the sync paths are what scale.

No migration headaches. Just point it at your stuff and go.

Catalog Every Endpoint in One Place, Scored by Quality

If you've ever stared at a flat list of 200 endpoints wondering where to even start, that's exactly what this fixes. Elva uses AI to group your endpoints into logical collections by domain: Users, Authentication, Analytics, Messaging, and so on.

Each collection surfaces its version, endpoint count, last update, and a quality score. The weakest specs float to the top, so you know exactly where to focus your cleanup effort first. No more guessing which APIs are ready and which ones are held together with duct tape.
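If you want a feel for how "weakest first" ordering works, here's a rough sketch under two loud assumptions: collections are taken from each operation's first OpenAPI tag (a stand-in for Elva's AI grouping), and the "quality score" is just the share of operations that have a description or summary. Elva's actual scoring isn't described here, so treat `score_collections` as a hypothetical illustration.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def score_collections(spec: dict) -> list[tuple[str, int, float]]:
    """Group operations by first tag and score each group by description coverage.

    Returns (collection, endpoint_count, score) tuples, weakest score first.
    """
    totals: dict[str, int] = {}
    documented: dict[str, int] = {}
    for path_item in spec.get("paths", {}).values():
        for method, op in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            collection = (op.get("tags") or ["Untagged"])[0]
            totals[collection] = totals.get(collection, 0) + 1
            if op.get("description") or op.get("summary"):
                documented[collection] = documented.get(collection, 0) + 1
    scored = [(name, n, documented.get(name, 0) / n) for name, n in totals.items()]
    # Lowest score first, so the collections needing cleanup lead the list.
    return sorted(scored, key=lambda row: (row[2], row[0]))
```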

Manage Each API in a Dedicated Workspace

Every collection opens into its own workspace with five tabs: overview, editor, testing, insights, and MCP configuration — all in one place. The structure is the same across every API, so the muscle memory transfers.

- Overview tells you who's actually consuming the API — broken down by frontend pages, components, MCP clients, and unused endpoints, counted directly from your codebase. It also shows the security posture: auth coverage, endpoints with no auth defined, and any bugs surfaced from testing. And if you've got an MCP server running, you can see its status, connected agents, and agent-readiness percentage right here.

- The editor is where you fix what the catalog flagged. It's a full schema editor with the spec on one side and a live preview on the other, so you can patch missing descriptions, tighten request bodies, or add examples without leaving Elva. And here's the part that matters: edits sync back to the source when the API came in through Git or Postman. Elva isn't a dead-end editor — your fixes flow back to where your team actually works.

- Test mode is a built-in API client. Pick an endpoint, set headers and parameters, send the request, and inspect the response inline. Collections, paths, and components from the spec are pre-loaded in the sidebar, so you skip the copy-paste step that traditional API clients require.

- The insights tab scores your API across four dimensions — design, developer experience, AI readiness, and security — with specific issues and actionable fixes, not just abstract numbers. Each category expands into concrete recommendations you can work through one by one. And if you need to share the report with your team, the whole thing exports to PDF.
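The "auth coverage" and "endpoints with no auth defined" figures from the overview can be approximated straight from an OpenAPI 3 spec. The sketch below is an assumption about how such a check might work, not Elva's logic: `auth_coverage` is a hypothetical name, and it treats any non-empty `security` list as protected.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def auth_coverage(spec: dict) -> tuple[float, list[str]]:
    """Return (fraction of operations with auth, list of unprotected operations)."""
    default_security = spec.get("security", [])
    unprotected = []
    total = 0
    for path, path_item in spec.get("paths", {}).items():
        for method, op in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            total += 1
            # An operation-level "security" overrides the top-level default;
            # an explicit empty list means no auth is required.
            requirements = op.get("security", default_security)
            if not requirements:
                unprotected.append(f"{method.upper()} {path}")
    coverage = 1.0 if total == 0 else (total - len(unprotected)) / total
    return coverage, unprotected
```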

Ship Production-Grade MCP Servers, Not Demos

This is where most teams get stuck. Elva generates hosted MCP servers with built-in authentication, observability, and logging. Before anything goes live, an AI readiness panel flags tools with missing schemas so you can fix them before agents trip over them.
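For context, an MCP tool is described by a `name`, a `description`, and an `inputSchema` (a JSON Schema object). Here's a minimal sketch of how one OpenAPI operation might map onto that shape while flagging missing schemas along the way. The mapping, the issue messages, and the name `operation_to_tool` are all assumptions for illustration, not Elva's generator, and the sketch assumes parameters are inlined rather than `$ref` pointers.

```python
def operation_to_tool(method: str, path: str, op: dict) -> tuple[dict, list[str]]:
    """Map one OpenAPI operation to an MCP-style tool definition plus readiness issues."""
    issues = []
    properties, required = {}, []
    for param in op.get("parameters", []):
        schema = param.get("schema")
        if schema is None:
            issues.append(f"parameter '{param['name']}' has no schema")
            schema = {}
        properties[param["name"]] = {**schema, "description": param.get("description", "")}
        if param.get("required"):
            required.append(param["name"])
    if "requestBody" in op:
        body = op["requestBody"].get("content", {}).get("application/json", {})
        if "schema" in body:
            properties["body"] = body["schema"]
        else:
            issues.append("request body has no JSON schema")
    if not op.get("description") and not op.get("summary"):
        issues.append("operation has no description")
    # Fall back to a name built from method and path when operationId is absent.
    fallback = f"{method}_{path.strip('/').replace('/', '_')}".replace("{", "").replace("}", "")
    tool = {
        "name": op.get("operationId") or fallback,
        "description": op.get("description") or op.get("summary") or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }
    return tool, issues
```

An empty issue list is the signal that a tool is safe to publish; anything else is worth fixing in the spec first.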

Each server comes with a hosted URL and a shareable install page, so users on tools like Cursor or Claude Desktop can connect in a couple of clicks.

A central dashboard shows every MCP server you're running: server name, visibility settings, tool count, and a deep link into its workspace. Drill into any server and you get total requests, response time, error rate, token usage, and per-agent activity — so you can see exactly which agents are calling, how often, and where things break.

It's what you actually need to let agents call your APIs in production — not a proof of concept, but real infrastructure.

Why Do APIs Need to Be Ready for AI Agents Now?

The API economy isn't slowing down. If anything, it's accelerating in a direction most teams haven't prepared for.

When agents become the primary consumers of your endpoints, the bar changes completely. Incomplete schemas, missing descriptions, undocumented auth flows — these aren't just annoyances for developers reading your docs. They're actual breaking points for autonomous systems trying to call your APIs programmatically.

An agent can't guess what a parameter does. It can't "figure out" your auth flow by reading a Slack thread. It needs everything spelled out, structured correctly, and accessible through a standard protocol. That's the readiness gap most teams are sitting on right now.

Elva gives you visibility into that gap and the tools to close it, endpoint by endpoint.

How Does Elva Fit With Theneo?

Think of it this way: Theneo handles your API documentation. Elva handles your API management. Together, every endpoint is covered, both for the humans building with your APIs and for the agents now calling them at scale.

They're complementary, not competing. If you're already a Theneo user, the migration is automatic — no setup, no re-importing.

Want to see how Elva fits your setup? We're happy to walk you through it. Reach out to your account manager or schedule a call with us.

Frequently Asked Questions

- What is an agent-ready API?

An agent-ready API has a complete, well-structured spec, clear authentication documentation, and descriptive metadata on every endpoint. It means an AI agent can discover your API, understand what each endpoint does, authenticate properly, and make calls without a human walking it through the process.

- Why can't AI agents just use existing API documentation?

They can, if the docs are good enough. The problem is most aren't. Agents need machine-readable specs, not markdown pages written for humans. Missing parameter descriptions, undocumented error codes, and vague auth instructions that a developer could muddle through will completely stop an agent in its tracks.

- What is an MCP server and why does it matter for AI agents?

MCP (Model Context Protocol) is a standard that lets AI agents discover and interact with external tools and APIs. An MCP server acts as the bridge between your API and the agent trying to use it. Without one, agents have no standardized way to find your endpoints or understand how to call them.

- How do I know if my APIs are ready for AI agents?

Start by auditing your OpenAPI specs. Are descriptions filled in for every endpoint, parameter, and response? Is your auth flow documented in a way a machine can parse? Are your error responses consistent and well-structured? If you're answering "not really" to any of those, you've got a readiness gap. Tools like Elva can score this automatically across your entire API surface.

- What happens if my APIs aren't agent-ready?

As AI agents become a bigger share of API traffic, they'll choose the APIs they can understand and call reliably. If yours aren't in that category, you lose that traffic to competitors whose APIs are.

- Do I need to rebuild my APIs to make them agent-ready?

Usually not. In most cases, it's about improving what you already have — filling in missing descriptions, cleaning up your specs, standardizing error responses, and setting up a proper MCP server. The API logic itself is probably fine. It's the metadata and discoverability layer that needs work.
